Foundation models for generalist medical artificial intelligence

Bommasani, R. et al. On the opportunities and risks of foundation models. Preprint at arXiv (2022).

Reed, S. et al. A generalist agent. In Transactions on Machine Learning Research (2022). This study presented Gato, a generalist model that can carry out a variety of tasks across modalities such as chatting, captioning images, playing video games and controlling a robot arm.

Alayrac, J.-B. et al. Flamingo: a Visual Language Model for few-shot learning. In Advances in Neural Information Processing Systems (eds Oh, A. H. et al.) 35, 23716–23736 (2022).

Lu, J., Clark, C., Zellers, R., Mottaghi, R. & Kembhavi, A. Unified-IO: a unified model for vision, language, and multi-modal tasks. Preprint at arXiv (2022).

Brown, T. et al. Language models are few-shot learners. In Advances in Neural Information Processing Systems (eds Larochelle, H. et al.) 33, 1877–1901 (2020). This study presented the language model GPT-3 and discovered that large language models can carry out in-context learning.

Aghajanyan, A. et al. CM3: a causal masked multimodal model of the Internet. Preprint at arXiv (2022).

Wei, J. et al. Emergent abilities of large language models. In Transactions on Machine Learning Research (2022).

Steinberg, E. et al. Language models are an effective representation learning technique for electronic health record data. J. Biomed. Inform. 113, 103637 (2021).

Tiu, E. et al. Expert-level detection of pathologies from unannotated chest X-ray images via self-supervised learning. Nat. Biomed. Eng. 6, 1399–1406 (2022). This study demonstrated that CheXzero—an early example of a foundation model in medical AI—can detect diseases on chest X-rays without explicit annotation by learning from natural-language descriptions contained in accompanying clinical reports.

Singhal, K. et al. Large language models encode clinical knowledge. Preprint at arXiv (2022). This study demonstrated that the language model Flan-PaLM achieves a passing score (67.6%) on a dataset of US Medical Licensing Examination questions and proposed Med-PaLM, a medical variant of Flan-PaLM with improved clinical reasoning and comprehension.

Yang, X. et al. A large language model for electronic health records. npj Digit. Med. 5, 194 (2022).

Food and Drug Administration. Artificial intelligence and machine learning (AI/ML)-enabled medical devices. FDA (2022).

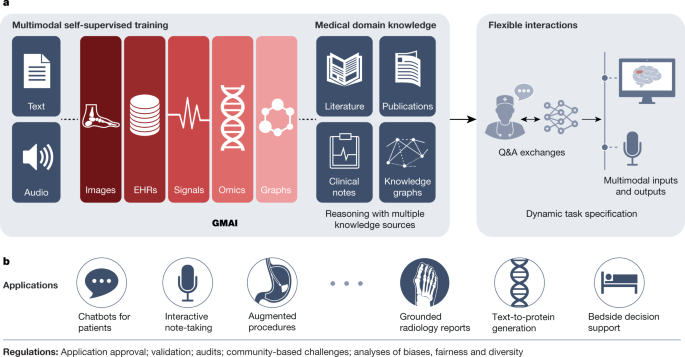

Acosta, J. N., Falcone, G. J., Rajpurkar, P. & Topol, E. J. Multimodal biomedical AI. Nat. Med. 28, 1773–1784 (2022).

Krishnan, R., Rajpurkar, P. & Topol, E. J. Self-supervised learning in medicine and healthcare. Nat. Biomed. Eng. 6, 1346–1352 (2022).

Devlin, J., Chang, M.-W., Lee, K. & Toutanova, K. BERT: pre-training of deep bidirectional transformers for language understanding. In Proc. 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (eds Burstein, J., Doran, C. & Solorio, T.) 1, 4171–4186 (2019). This paper introduced masked language modelling, a widely used technique for training language models in which parts of a text sequence are hidden (masked) and the model is trained to fill in the blanks. This strategy can be extended beyond text to other data types.

Radford, A. et al. Learning transferable visual models from natural language supervision. In Proc. 38th Int. Conference on Machine Learning (eds Meila, M. & Zhang, T.) 139, 8748–8763 (2021). This paper introduced contrastive language–image pretraining (CLIP), a multimodal approach that enabled a model to learn from images paired with raw text.

Zhang, X.-A. et al. A zoonotic henipavirus in febrile patients in China. N. Engl. J. Med. 387, 470–472 (2022).

Vaswani, A. et al. Attention is all you need. In Advances in Neural Information Processing Systems (eds Guyon, I. et al.) 30, 5998–6008 (2017). This paper introduced the transformer architecture, a key breakthrough that ultimately led to the development of large-scale foundation models.

Borgeaud, S. et al. Improving language models by retrieving from trillions of tokens. In Proc. 39th Int. Conference on Machine Learning (eds Chaudhuri, K. et al.) 162, 2206–2240 (2022).

Guu, K., Lee, K., Tung, Z., Pasupat, P. & Chang, M.-W. REALM: retrieval-augmented language model pre-training. In Proc. 37th Int. Conference on Machine Learning (eds Daumé, H. & Singh, A.) 119, 3929–3938 (2020).

Igelström, E. et al. Causal inference and effect estimation using observational data. J. Epidemiol. Community Health 76, 960–966 (2022).

Wang, Q., Huang, K., Chandak, P., Zitnik, M. & Gehlenborg, N. Extending the nested model for user-centric XAI: a design study on GNN-based drug repurposing. IEEE Trans. Vis. Comput. Graph. 29, 1266–1276 (2023).

Li, J. et al. Align before fuse: vision and language representation learning with momentum distillation. In Advances in Neural Information Processing Systems (eds Ranzato, M. et al.) 34, 9694–9705 (2021).

Wang, Z. et al. SimVLM: simple visual language model pretraining with weak supervision. In Int. Conference on Learning Representations (eds Hofmann, K. & Rush, A.) (2022).

Yasunaga, M. et al. Deep bidirectional language-knowledge graph pretraining. In Advances in Neural Information Processing Systems (eds Oh, A. H. et al.) 35 (2022).

Yasunaga, M., Ren, H., Bosselut, A., Liang, P. & Leskovec, J. QA-GNN: reasoning with language models and knowledge graphs for question answering. In Proc. 2021 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (eds Toutanova, K. et al.) 535–546 (2021).

Guha Roy, A. et al. Does your dermatology classifier know what it doesn’t know? Detecting the long-tail of unseen conditions. Med. Image Anal. 75, 102274 (2022).

Radford, A. et al. Robust speech recognition via large-scale weak supervision. Preprint at arXiv (2022).

Dixon, R. F. et al. A virtual type 2 diabetes clinic using continuous glucose monitoring and endocrinology visits. J. Diabetes Sci. Technol. 14, 908–911 (2020).

Kucera, T., Togninalli, M. & Meng-Papaxanthos, L. Conditional generative modeling for de novo protein design with hierarchical functions. Bioinformatics 38, 3454–3461 (2022).

Rombach, R., Blattmann, A., Lorenz, D., Esser, P. & Ommer, B. High-resolution image synthesis with latent diffusion models. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition (eds Chellappa, R. et al.) 10684–10695 (2022).

Ramesh, A. et al. Zero-shot text-to-image generation. In Proc. 38th Int. Conference on Machine Learning (eds Meila, M. & Zhang, T.) 139, 8821–8831 (2021).

Jumper, J. et al. Highly accurate protein structure prediction with AlphaFold. Nature 596, 583–589 (2021).

Zvyagin, M. et al. GenSLMs: genome-scale language models reveal SARS-CoV-2 evolutionary dynamics. Preprint at bioRxiv (2022).

Watson, J. L. et al. Broadly applicable and accurate protein design by integrating structure prediction networks and diffusion generative models. Preprint at bioRxiv (2022).

The UniProt Consortium. UniProt: the universal protein knowledgebase. Nucleic Acids Res. 45, D158–D169 (2017).

Guo, L. L. et al. Systematic review of approaches to preserve machine learning performance in the presence of temporal dataset shift in clinical medicine. Appl. Clin. Inform. 12, 808–815 (2021).

Finlayson, S. G. et al. The clinician and dataset shift in artificial intelligence. N. Engl. J. Med. 385, 283–286 (2021).

Lampinen, A. K. et al. Can language models learn from explanations in context? In Findings of the Association for Computational Linguistics: EMNLP 2022 (eds Goldberg, Y., Kozareva, Z. & Zhang, Y.) 537–563 (2022).

Yoon, S. H., Lee, J. H. & Kim, B.-N. Chest CT findings in hospitalized patients with SARS-CoV-2: Delta versus Omicron variants. Radiology 306, 252–260 (2023).

Ouyang, L. et al. Training language models to follow instructions with human feedback. In Advances in Neural Information Processing Systems (eds Oh, A. H. et al.) 35, 27730–27744 (2022).

Pilipiszyn, A. GPT-3 powers the next generation of apps. OpenAI (2021).

Burns, C., Ye, H., Klein, D. & Steinhardt, J. Discovering latent knowledge in language models without supervision. Preprint at arXiv (2022).

Obermeyer, Z., Powers, B., Vogeli, C. & Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science 366, 447–453 (2019).

Sex and Gender Bias in Technology and Artificial Intelligence: Biomedicine and Healthcare Applications (Academic, 2022).

Srivastava, A. et al. Beyond the imitation game: quantifying and extrapolating the capabilities of language models. Preprint at arXiv (2022).

Carlini, N. et al. Extracting training data from large language models. In Proc. 30th USENIX Security Symposium (eds Bailey, M. & Greenstadt, R.) 6, 2633–2650 (2021).

Branch, H. J. et al. Evaluating the susceptibility of pre-trained language models via handcrafted adversarial examples. Preprint at arXiv (2022).

Chowdhery, A. et al. PaLM: scaling language modeling with pathways. Preprint at arXiv (2022).

Zhang, S. et al. OPT: open pre-trained transformer language models. Preprint at arXiv (2022).

Hoffmann, J. et al. An empirical analysis of compute-optimal large language model training. In Advances in Neural Information Processing Systems (eds Oh, A. H. et al.) 35, 30016–30030 (2022).

Chung, H. W. et al. Scaling instruction-finetuned language models. Preprint at arXiv (2022).

Kung, T. H. et al. Performance of ChatGPT on USMLE: potential for AI-assisted medical education using large language models. PLoS Digit. Health 2, e0000198 (2023).

Huang, S.-C., Shen, L., Lungren, M. P. & Yeung, S. GLoRIA: a multimodal global-local representation learning framework for label-efficient medical image recognition. In Proc. IEEE/CVF Int. Conference on Computer Vision (eds Brown, M. S. et al.) 3942–3951 (2021).

Johnson, A. E. W. et al. MIMIC-IV, a freely accessible electronic health record dataset. Sci. Data 10, 1 (2023).

Sudlow, C. et al. UK Biobank: an open access resource for identifying the causes of a wide range of complex diseases of middle and old age. PLoS Med. 12, e1001779 (2015).

Gou, J., Yu, B., Maybank, S. J. & Tao, D. Knowledge distillation: a survey. Int. J. Comput. Vis. 129, 1789–1819 (2021).

Vegunta, R., Vegunta, R. & Kutti Sridharan, G. Secondary aortoduodenal fistula presenting as gastrointestinal bleeding and fungemia. Cureus 11, e5575 (2019).