A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians

Radford, A., Narasimhan, K., Salimans, T. & Sutskever, I. Improving Language Understanding by Generative Pre-training. (2018).

Brown, T. et al. Language models are few-shot learners. Adv. Neural Inf. Process. Syst. 33, 1877–1901 (2020).

OpenAI et al. GPT-4 technical report. Preprint at arXiv (2023).

Touvron, H. et al. LLaMA: open and efficient foundation language models. Preprint at arXiv (2023).

Touvron, H. et al. Llama 2: open foundation and fine-tuned chat models. Preprint at arXiv (2023).

Chowdhery, A. et al. PaLM: scaling language modeling with pathways. J. Mach. Learn. Res. 24, 1–113 (2023).

Anil, R. et al. PaLM 2 technical report. Preprint at arXiv (2023).

Thoppilan, R. et al. LaMDA: language models for dialog applications. Preprint at arXiv (2022).

Thirunavukarasu, A. J. et al. Large language models in medicine. Nat. Med. 29, 1930–1940 (2023).

Singhal, K. et al. Large language models encode clinical knowledge. Nature 620, 172–180 (2023).

Ueda, D. et al. ChatGPT’s diagnostic performance from patient history and imaging findings on the diagnosis please quizzes. Radiology 308, e231040 (2023).

Kanjee, Z., Crowe, B. & Rodman, A. Accuracy of a generative artificial intelligence model in a complex diagnostic challenge. JAMA 330, 78–80 (2023).

Hirosawa, T., Mizuta, K., Harada, Y. & Shimizu, T. Comparative evaluation of diagnostic accuracy between Google Bard and physicians. Am. J. Med. 136, 1119–1123.e18 (2023).

Shea, Y.-F., Lee, C. M. Y., Ip, W. C. T., Luk, D. W. A. & Wong, S. S. W. Use of GPT-4 to analyze medical records of patients with extensive investigations and delayed diagnosis. JAMA Netw. Open 6, e2325000 (2023).

Chee, J., Kwa, E. D. & Goh, X. ‘Vertigo, likely peripheral’: the dizzying rise of ChatGPT. Eur. Arch. Otorhinolaryngol. 280, 4687–4689 (2023).

Lyons, R. J., Arepalli, S. R., Fromal, O., Choi, J. D. & Jain, N. Artificial intelligence chatbot performance in triage of ophthalmic conditions. Can. J. Ophthalmol. (2023).

Benoit, J. R. A. ChatGPT for clinical vignette generation, revision, and evaluation. Preprint at medRxiv (2023).

Hirosawa, T. et al. ChatGPT-generated differential diagnosis lists for complex case-derived clinical vignettes: diagnostic accuracy evaluation. JMIR Med. Inf. 11, e48808 (2023).

Hirosawa, T. et al. Diagnostic accuracy of differential-diagnosis lists generated by generative pretrained transformer 3 chatbot for clinical vignettes with common chief complaints: a pilot study. Int. J. Environ. Res. Public Health 20, 3378 (2023).

Wei, Q., Cui, Y., Wei, B., Cheng, Q. & Xu, X. Evaluating the performance of ChatGPT in differential diagnosis of neurodevelopmental disorders: a pediatricians-machine comparison. Psychiatry Res. 327, 115351 (2023).

Allahqoli, L., Ghiasvand, M. M., Mazidimoradi, A., Salehiniya, H. & Alkatout, I. Diagnostic and management performance of ChatGPT in obstetrics and gynecology. Gynecol. Obstet. Invest. 88, 310–313 (2023).

Levartovsky, A., Ben-Horin, S., Kopylov, U., Klang, E. & Barash, Y. Towards AI-augmented clinical decision-making: an examination of ChatGPT’s utility in acute ulcerative colitis presentations. Am. J. Gastroenterol. 118, 2283–2289 (2023).

Bushuven, S. et al. ‘ChatGPT, can you help me save my child’s life?’—diagnostic accuracy and supportive capabilities to lay rescuers by ChatGPT in prehospital basic life support and paediatric advanced life support cases—an in-silico analysis. J. Med. Syst. 47, 123 (2023).

Knebel, D. et al. Assessment of ChatGPT in the prehospital management of ophthalmological emergencies—an analysis of 10 fictional case vignettes. Klin. Monbl. Augenheilkd. (2023).

Pillai, J. & Pillai, K. Accuracy of generative artificial intelligence models in differential diagnoses of familial Mediterranean fever and deficiency of Interleukin-1 receptor antagonist. J. Transl. Autoimmun. 7, 100213 (2023).

Ito, N. et al. The accuracy and potential racial and ethnic biases of GPT-4 in the diagnosis and triage of health conditions: evaluation study. JMIR Med. Educ. 9, e47532 (2023).

Sorin, V. et al. GPT-4 multimodal analysis on ophthalmology clinical cases including text and images. Preprint at medRxiv (2023).

Madadi, Y. et al. ChatGPT assisting diagnosis of neuro-ophthalmology diseases based on case reports. Preprint at medRxiv (2023).

Schubert, M. C., Lasotta, M., Sahm, F., Wick, W. & Venkataramani, V. Evaluating the multimodal capabilities of generative AI in complex clinical diagnostics. Preprint at medRxiv (2023).

Kiyohara, Y. et al. Large language models to differentiate vasospastic angina using patient information. Preprint at medRxiv (2023).

Sultan, I. et al. Using ChatGPT to predict cancer predisposition genes: a promising tool for pediatric oncologists. Cureus 15, e47594 (2023).

Horiuchi, D. et al. Accuracy of ChatGPT generated diagnosis from patient’s medical history and imaging findings in neuroradiology cases. Neuroradiology 66, 73–79 (2023).

Stoneham, S., Livesey, A., Cooper, H. & Mitchell, C. Chat GPT vs clinician: challenging the diagnostic capabilities of A.I. in dermatology. Clin. Exp. Dermatol. (2023).

Rundle, C. W., Szeto, M. D., Presley, C. L., Shahwan, K. T. & Carr, D. R. Analysis of ChatGPT generated differential diagnoses in response to physical exam findings for benign and malignant cutaneous neoplasms. J. Am. Acad. Dermatol. (2023).

Rojas-Carabali, W. et al. Chatbots vs. human experts: evaluating diagnostic performance of chatbots in uveitis and the perspectives on AI adoption in ophthalmology. Ocul. Immunol. Inflamm. 32, 1591–1598 (2024).

Fraser, H. et al. Comparison of diagnostic and triage accuracy of Ada Health and WebMD symptom checkers, ChatGPT, and physicians for patients in an emergency department: clinical data analysis study. JMIR Mhealth Uhealth 11, e49995 (2023).

Krusche, M., Callhoff, J., Knitza, J. & Ruffer, N. Diagnostic accuracy of a large language model in rheumatology: comparison of physician and ChatGPT-4. Rheumatol. Int. (2023).

Galetta, K. & Meltzer, E. Does GPT-4 have neurophobia? Localization and diagnostic accuracy of an artificial intelligence-powered chatbot in clinical vignettes. J. Neurol. Sci. 453, 120804 (2023).

Delsoz, M. et al. The use of ChatGPT to assist in diagnosing glaucoma based on clinical case reports. Ophthalmol. Ther. 12, 3121–3132 (2023).

Hu, X. et al. What can GPT-4 do for diagnosing rare eye diseases? A pilot study. Ophthalmol. Ther. 12, 3395–3402 (2023).

Abi-Rafeh, J., Hanna, S., Bassiri-Tehrani, B., Kazan, R. & Nahai, F. Complications following facelift and neck lift: implementation and assessment of large language model and artificial intelligence (ChatGPT) performance across 16 simulated patient presentations. Aesthetic Plast. Surg. 47, 2407–2414 (2023).

Koga, S., Martin, N. B. & Dickson, D. W. Evaluating the performance of large language models: ChatGPT and Google Bard in generating differential diagnoses in clinicopathological conferences of neurodegenerative disorders. Brain Pathol. 34, e13207 (2023).

Xv, Y., Peng, C., Wei, Z., Liao, F. & Xiao, M. Can Chat-GPT a substitute for urological resident physician in diagnosing diseases?: a preliminary conclusion from an exploratory investigation. World J. Urol. 41, 2569–2571 (2023).

Senthujan, S. M. et al. GPT-4V(ision) unsuitable for clinical care and education: a clinician-evaluated assessment. Preprint at medRxiv (2023).

Mori, Y., Izumiyama, T., Kanabuchi, R., Mori, N. & Aizawa, T. Large language model may assist diagnosis of SAPHO syndrome by bone scintigraphy. Mod. Rheumatol. 34, 1043–1046 (2024).

Mykhalko, Y., Kish, P., Rubtsova, Y., Kutsyn, O. & Koval, V. From text to diagnose: ChatGPT’s efficacy in medical decision-making. Wiad. Lek. 76, 2345–2350 (2023).

Andrade-Castellanos, C. A., Tapia-de la Paz, M. T. & Farfán-Flores, P. E. Accuracy of ChatGPT for the diagnosis of clinical entities in the field of internal medicine. Gac. Med. Mex. 159, 439–442 (2023).

Daher, M. et al. Breaking barriers: can ChatGPT compete with a shoulder and elbow specialist in diagnosis and management? JSES Int. 7, 2534–2541 (2023).

Suthar, P. P., Kounsal, A., Chhetri, L., Saini, D. & Dua, S. G. Artificial intelligence (AI) in radiology: a deep dive into ChatGPT 4.0’s accuracy with the American Journal of Neuroradiology’s (AJNR) ‘Case of the Month’. Cureus 15, e43958 (2023).

Nakaura, T. et al. Preliminary assessment of automated radiology report generation with generative pre-trained transformers: comparing results to radiologist-generated reports. Jpn. J. Radiol. 15, 1–11 (2023).

Berg, H. T. et al. ChatGPT and generating a differential diagnosis early in an emergency department presentation. Ann. Emerg. Med. 83, 83–86 (2024).

Gebrael, G. et al. Enhancing triage efficiency and accuracy in emergency rooms for patients with metastatic prostate cancer: a retrospective analysis of artificial intelligence-assisted triage using ChatGPT 4.0. Cancers 15, 3717 (2023).

Ravipati, A., Pradeep, T. & Elman, S. A. The role of artificial intelligence in dermatology: the promising but limited accuracy of ChatGPT in diagnosing clinical scenarios. Int. J. Dermatol. 62, e547–e548 (2023).

Shikino, K. et al. Evaluation of ChatGPT-generated differential diagnosis for common diseases with atypical presentation: descriptive research. JMIR Med. Educ. 10, e58758 (2024).

Horiuchi, D. et al. Comparing the diagnostic performance of GPT-4-based ChatGPT, GPT-4V-based ChatGPT, and radiologists in challenging neuroradiology cases. Clin. Neuroradiol. 34, 779–787 (2024).

Kumar, R. P. et al. Can artificial intelligence mitigate missed diagnoses by generating differential diagnoses for neurosurgeons? World Neurosurg. 187, e1083–e1088 (2024).

Chiu, W. H. K. et al. Evaluating the diagnostic performance of large language models on complex multimodal medical cases. J. Med. Internet Res. 26, e53724 (2024).

Kikuchi, T. et al. Toward improved radiologic diagnostics: investigating the utility and limitations of GPT-3.5 Turbo and GPT-4 with quiz cases. AJNR Am. J. Neuroradiol. 45, 1506–1511 (2024).

Bridges, J. M. Computerized diagnostic decision support systems: a comparative performance study of Isabel Pro vs. ChatGPT4. Diagnosis 11, 250–258 (2024).

Shieh, A. et al. Assessing ChatGPT 4.0’s test performance and clinical diagnostic accuracy on USMLE STEP 2 CK and clinical case reports. Sci. Rep. 14, 1–8 (2024).

Warrier, A., Singh, R., Haleem, A., Zaki, H. & Eloy, J. A. The comparative diagnostic capability of large language models in otolaryngology. Laryngoscope 134, 3997–4002 (2024).

Han, T. et al. Comparative analysis of multimodal large language model performance on clinical vignette questions. JAMA 331, 1320–1321 (2024).

Milad, D. et al. Assessing the medical reasoning skills of GPT-4 in complex ophthalmology cases. Br. J. Ophthalmol. 108, 1398–1405 (2024).

Abdullahi, T., Singh, R. & Eickhoff, C. Learning to make rare and complex diagnoses with generative AI assistance: qualitative study of popular large language models. JMIR Med. Educ. 10, e51391 (2024).

Tenner, Z. M., Cottone, M. & Chavez, M. Harnessing the open access version of ChatGPT for enhanced clinical opinions. Preprint at medRxiv (2023).

Luk, D. W. A., Ip, W. C. T. & Shea, Y.-F. Performance of GPT-4 and GPT-3.5 in generating accurate and comprehensive diagnoses across medical subspecialties. J. Chin. Med. Assoc. 87, 259 (2024).

Savage, T., Nayak, A., Gallo, R., Rangan, E. & Chen, J. H. Diagnostic reasoning prompts reveal the potential for large language model interpretability in medicine. NPJ Digit. Med. 7, 1–7 (2024).

Franc, J. M., Cheng, L., Hart, A., Hata, R. & Hertelendy, A. Repeatability, reproducibility, and diagnostic accuracy of a commercial large language model (ChatGPT) to perform emergency department triage using the Canadian triage and acuity scale. CJEM 26, 40–46 (2024).

Yang, J. et al. RDmaster: a novel phenotype-oriented dialogue system supporting differential diagnosis of rare disease. Comput. Biol. Med. 169, 107924 (2024).

Reese, J. T. et al. On the limitations of large language models in clinical diagnosis. Preprint at medRxiv (2023).

do Olmo, J., Logroño, J., Mascías, C., Martínez, M. & Isla, J. Assessing DxGPT: diagnosing rare diseases with various large language models. Preprint at medRxiv (2024).

Cesur, T., Gunes, Y. C., Camur, E. & Dağlı, M. Empowering radiologists with ChatGPT-4o: comparative evaluation of large language models and radiologists in cardiac cases. Preprint at medRxiv (2024).

Schramm, S. et al. Impact of multimodal prompt elements on diagnostic performance of GPT-4(V) in challenging brain MRI cases. Preprint at medRxiv (2024).

Gunes, Y. C. & Cesur, T. A comparative study: diagnostic performance of ChatGPT 3.5, Google Bard, Microsoft Bing, and radiologists in thoracic radiology cases. Preprint at medRxiv (2024).

Olshaker, H. et al. Evaluating the diagnostic performance of large language models in identifying complex multisystemic syndromes: a comparative study with radiology residents. Preprint at medRxiv (2024).

Hirosawa, T. et al. Diagnostic performance of generative artificial intelligences for a series of complex case reports. Digit. Health 10, 20552076241265215 (2024).

Mitsuyama, Y. et al. Comparative analysis of GPT-4-based ChatGPT’s diagnostic performance with radiologists using real-world radiology reports of brain tumors. Eur. Radiol. (2024).

Yazaki, M. et al. Emergency patient triage improvement through a retrieval-augmented generation enhanced large-scale language model. Prehosp. Emerg. Care (2024).

Ghalibafan, S. et al. Applications of multimodal generative artificial intelligence in a real-world retina clinic setting. Retina 44, 1732–1740 (2024).

Hager, P. et al. Evaluation and mitigation of the limitations of large language models in clinical decision-making. Nat. Med. 30, 2613–2622 (2024).

Horiuchi, D. et al. ChatGPT’s diagnostic performance based on textual vs. visual information compared to radiologists’ diagnostic performance in musculoskeletal radiology. Eur. Radiol. (2024).

Ríos-Hoyo, A. et al. Evaluation of large language models as a diagnostic aid for complex medical cases. Front. Med. 11, 1380148 (2024).

Liu, X. et al. Claude 3 Opus and ChatGPT with GPT-4 in dermoscopic image analysis for melanoma diagnosis: comparative performance analysis. JMIR Med. Inform. 12, e59273 (2024).

Sonoda, Y. et al. Diagnostic performances of GPT-4o, Claude 3 Opus, and Gemini 1.5 Pro in ‘Diagnosis Please’ cases. Jpn. J. Radiol. (2024).

Wada, A. et al. Optimizing GPT-4 Turbo diagnostic accuracy in neuroradiology through prompt engineering and confidence thresholds. Diagnostics 14, 1541 (2024).

Gargari, O. K. et al. Diagnostic accuracy of large language models in psychiatry. Asian J. Psychiatr. 100, 104168 (2024).

Mihalache, A. et al. Interpretation of clinical retinal images using an artificial intelligence chatbot. Ophthalmol. Sci. 4, 100556 (2024).

Rutledge, G. W. Diagnostic accuracy of GPT-4 on common clinical scenarios and challenging cases. Learn Health Syst. 8, e10438 (2024).

Ueda, D. et al. Evaluating GPT-4-based ChatGPT’s clinical potential on the NEJM quiz. BMC Digit. Health 2, 4 (2024).

Delsoz, M. et al. Performance of ChatGPT in diagnosis of corneal eye diseases. Preprint at medRxiv (2023).

Brin, D. et al. Assessing GPT-4 multimodal performance in radiological image analysis. Preprint at medRxiv (2023).

Levine, D. M. et al. The diagnostic and triage accuracy of the GPT-3 artificial intelligence model: an observational study. Lancet Digit. Health 6, e555–e561 (2024).

Williams, C. Y. K. et al. Use of a large language model to assess clinical acuity of adults in the emergency department. JAMA Netw. Open 7, e248895 (2024).

GPT-4V(ision) System Card. (2023).

Model Card Claude 3. (2024).

Gemini Team et al. Gemini 1.5: unlocking multimodal understanding across millions of tokens of context. Preprint at arXiv (2024).

GPT-4o(mni) System card. (2024).

Dubey, A. et al. The Llama 3 herd of models. Preprint at arXiv (2024).

Perplexity Model Card Perplexity (2024).

Üstün, A. et al. Aya model: an instruction finetuned open-access multilingual language model. Preprint at arXiv (2024).

Model Card Claude 2. (2023).

Model Card Claude 3.5. (2024).

Toma, A. et al. Clinical Camel: an open expert-level medical language model with dialogue-based knowledge encoding. Preprint at arXiv (2023).

Gemini Team et al. Gemini: a family of highly capable multimodal models. Preprint at arXiv (2023).

Glass version 2.0. GLASS (2024).

Han, T. et al. Comparative analysis of GPT-4Vision, GPT-4 and Open Source LLMs in clinical diagnostic accuracy: a benchmark against human expertise. Preprint at medRxiv (2023).

Han, T. et al. MedAlpaca—an open-source collection of medical conversational AI models and training data. Preprint at arXiv (2023).

Chen, Z. et al. MEDITRON-70B: scaling medical pretraining for large language models. Preprint at arXiv (2023).

Jiang, A. Q. et al. Mistral 7B. Preprint at arXiv (2023).

Jiang, A. Q. et al. Mixtral of experts. Preprint at arXiv (2024).

Zhang, V. NVIDIA AI foundation models: build custom enterprise Chatbots and co-pilots with production-ready LLMs. NVIDIA Technical Blog (2023).

Köpf, A. et al. OpenAssistant Conversations—democratizing large language model alignment. Preprint at arXiv (2023).

Xu, C. et al. WizardLM: empowering large language models to follow complex instructions. Preprint at arXiv (2023).

Wolff, R. F. et al. PROBAST: a tool to assess the risk of bias and applicability of prediction model studies. Ann. Intern. Med. 170, 51–58 (2019).

Wahl, B., Cossy-Gantner, A., Germann, S. & Schwalbe, N. R. Artificial intelligence (AI) and global health: how can AI contribute to health in resource-poor settings? BMJ Glob. Health 3, e000798 (2018).

Preiksaitis, C. & Rose, C. Opportunities, challenges, and future directions of generative artificial intelligence in medical education: scoping review. JMIR Med. Educ. 9, e48785 (2023).

Clusmann, J. et al. The future landscape of large language models in medicine. Commun. Med. 3, 141 (2023).

Ueda, D. et al. Fairness of artificial intelligence in healthcare: review and recommendations. Jpn. J. Radiol. 42, 3–15 (2023).

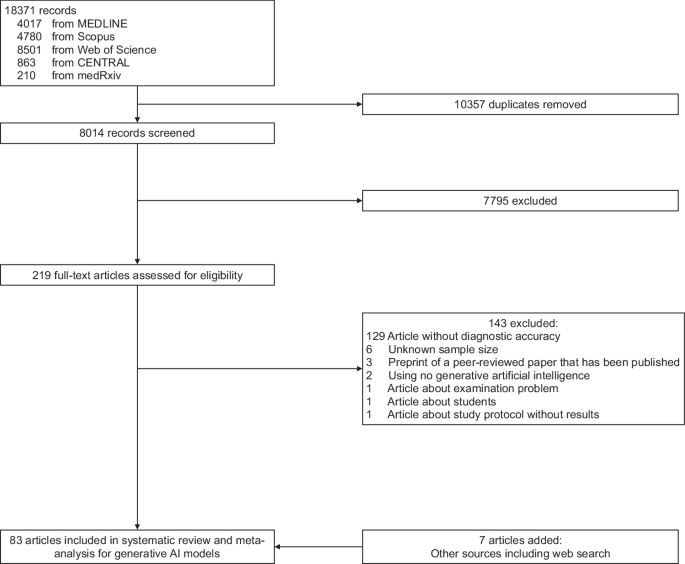

McInnes, M. D. F. et al. Preferred reporting items for a systematic review and meta-analysis of diagnostic test accuracy studies: the PRISMA-DTA statement. JAMA 319, 388–396 (2018).

Moher, D., Liberati, A., Tetzlaff, J., Altman, D. G. & PRISMA Group. Preferred reporting items for systematic reviews and meta-analyses: the PRISMA statement. BMJ 339, b2535 (2009).

Walston, S. L. et al. Data set terminology of deep learning in medicine: a historical review and recommendation. Jpn. J. Radiol. 42, 1100–1109 (2024).
