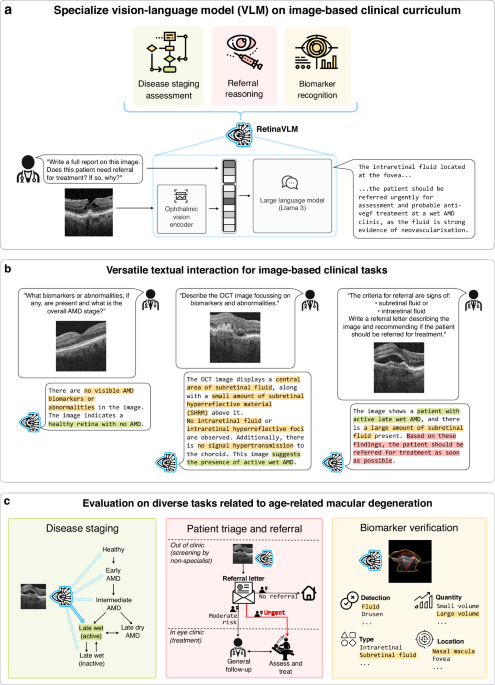

Retinal image dataset curation

We use two retinal OCT datasets in this study. The first, described in the “Retrospective patient dataset” section, contains a cohort of patients with AMD collected retrospectively at the Southampton Eye Unit. The second, described in the “External testing dataset of referred patients” section, contains scans of the initial visits of patients referred, primarily by opticians, to the Southampton Eye Unit. The curation and use of this data is summarized in a flowchart diagram in Supplementary Fig. 11.

All data was collected in the scope of the PINNACLE study (ClinicalTrials.gov NCT04269304), which received approval from the East Midlands-Leicester Central Research Ethics Committee in the United Kingdom (ref. 19/EM/0163) and the institutional review boards of all participating institutions. The study complies with the principles of Good Clinical Practice and the Declaration of Helsinki, and informed consent procedures were followed according to the same principles. All images were captured using Topcon 3D OCT scanners (Topcon Corporation, Tokyo, Japan). Both datasets contain images of size 416 × 512 with a pixel size of 3.5 × 11.7 μm².

Retrospective patient dataset

The retrospective dataset contains 44,633 OCT images from 6152 eyes belonging to 3468 patients, collected over eight years, between 2012 and 2020, at the Southampton Eye Unit and curated by the PINNACLE consortium. For each OCT scan we use the mediolateral 2D slice centered at the fovea. We then designated 41,926 of the 44,633 OCT images from 5547 eyes of 3057 patients for training purposes. Additionally, we reserved 2,311 images from 326 eyes of 187 patients for validation, and 396 images from 279 eyes of 224 patients for testing. We ensured that images from each patient do not appear in more than one of the training, validation or test sets. The training set was used to create both curriculum parts 1 and 2, detailed in the “Report curation and question-answer pair generation (curriculum part 1)” and “Report curation and question-answer pair generation (curriculum part 2)” sections. The retrospective test set was used to evaluate the resulting model in the disease staging and biomarker verification section in the Results.
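The patient-level separation described above can be checked mechanically. The following is a minimal sketch, assuming each image record is a hypothetical `(patient_id, image_id)` tuple; the function name and record layout are illustrative and are not taken from the study's code.

```python
def assert_patient_disjoint(splits):
    """Raise ValueError if any patient appears in more than one split.

    `splits` maps a split name to a list of (patient_id, image_id) tuples.
    Both the tuple layout and the names are hypothetical.
    """
    seen = {}  # patient_id -> name of the split where it was first seen
    for split_name, records in splits.items():
        for patient_id, _image_id in records:
            owner = seen.setdefault(patient_id, split_name)
            if owner != split_name:
                raise ValueError(
                    f"patient {patient_id} is in both {owner} and {split_name}"
                )
    return True


# The split sizes reported in the text add up to the full dataset.
assert 41926 + 2311 + 396 == 44633   # images
assert 3057 + 187 + 224 == 3468      # patients
```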

To evaluate the accuracy of image report conclusions, we used a multi-rater process for curating high-quality labels. We now expand on this by detailing the breakdown of disease stage definitions used in this study. AMD remains a relatively poorly understood disease, with multiple grading systems that vary in stage classification and definitions40,41,42,43,44. Its classification remains an active area of research44. Classifying a retinal OCT image into a single disease stage is challenging, as overlapping features can indicate multiple AMD stages, often requiring ophthalmologists to make intuitive assessments beyond strict criteria. In this study, the ophthalmologists reported classifying healthy and early AMD based on the presence or absence of small drusen, and intermediate AMD by medium, or intermediate, to large drusen41. Late dry AMD was identified by atrophy of the retinal pigment epithelium, evidenced by hypertransmission. Presence of any subretinal hyperreflective material or other scarring advanced the classification to inactive wet AMD. Finally, presence of any subretinal or intraretinal fluid upgraded the image-based classification to active late wet AMD. These classifications yielded a testing set of 276 images, of which 36 were labeled as healthy, 18 as early, 64 as intermediate, 25 as late dry, 75 as inactive late wet and 58 as active late wet AMD.

Each image was labeled with the presence or absence of 10 biomarkers, and an additional 21 labels that record their size, number, and other applicable attributes. Due to the substantially increased number of variables compared to disease staging, each image was labeled only once by one of the six junior ophthalmologists. This process yielded a testing set of 396 images, of which 107 were labeled with drusen, 70 with pigment epithelial detachments, 150 with pigment epithelial elevation, 27 with double-layer sign, 122 with hypertransmission, 155 with atrophic/degenerated pigment epithelium layers, 60 with subretinal hyperreflective material, 81 with hyperreflective foci, 25 with subretinal fluid, and 62 with intraretinal fluid.

External testing dataset of referred patients

We also collected an external dataset of 95 patients who were referred, primarily by opticians, for urgent care at the Southampton Eye Unit between 02/2023 and 12/2023. None had yet received treatment for AMD, and most had no AMD, intermediate AMD or small features related to active wet AMD. This represents a distribution shift from the retrospective cohort, where many patients had already received treatment for AMD and were in the inactive late wet stage of AMD. As such, it enabled us to estimate the robustness of both variants of RetinaVLM to shifts in patient population. This dataset was not used for model training and was reserved for testing VLMs on patient referral as shown in Fig. 5.

For each patient we sourced scans of both their left and right eye that were acquired on their first visit to the clinic. We also collected the originally issued letter of referral, as depicted in Fig. 5d. Then, two junior ophthalmologists independently reviewed the 3D OCT volumes of both eyes to label the patient’s risk and decide between three levels of increasing referral urgency. To help standardize their labels, the ophthalmologists referred to a set of agreed patient referral guidelines. For full documentation see Supplementary Fig. 10c.

After labeling the level of referral urgency, the ophthalmologists then selected the image slice that most supported their assessment of the patient’s risk. In healthy patients where both volumes contained no pathological signs in any of the image slices, they were instructed to select the mediolateral fovea-centered 2D slice from one of the two volumes. Finally, any inter-rater disagreements were then arbitrated by a panel involving the two senior ophthalmologists. Of the 95 images in the external cohort, 25 did not evidence need of referral, 41 indicated the patient was at moderate risk and needed general attention by a specialist, while only 29 indicated the patient needed urgent referral.

RetinaVLM vision-language model architecture

RetinaVLM follows the architectural design of MiniGPT4 (ref. 24). It consists of two main components: an ophthalmological vision encoder and a generative LLM (see Supplementary Fig. 9). For the ophthalmological vision encoder, we adopt a ResNet50 convolutional neural network with over 23 million parameters, which was pre-trained with self-supervised contrastive learning on the 41,926 OCT images from the training set of the retrospective cohort. Specifically, it was trained with Bootstrap Your Own Latent (BYOL)45 using the same implementation details as the standard contrastive approaches in ref. 21, which consistently performed on par with RETFound22 on data from the Southampton Eye Unit. This vision encoder projects each 192 × 192 input image to a set of spatially arranged 6 × 6 visual embeddings, which are extracted from the last layer before global average pooling. Each embedding has a dimension of h_img = 2048. They also have a receptive field of size 336, so each embedding contains global knowledge of the image that is contextualized at its local position.

For the LLM, we employ the 8 billion parameter instruction-tuned Llama3 model by Meta23,46, which was the most performant openly available model at the time of our study. Llama3 uses an embedding dimension of h_lang = 4096.

The ophthalmological vision encoder provides visual information regarding the OCT image to the LLM via an adapter. The adapter is a linear layer of size h_img × h_lang that processes visual information for use by the LLM. Specifically, it independently maps each of the visual embeddings, via a linear transformation implemented as a matrix multiplication, into the language embedding space used by the LLM. The design and application of the adapter follow those of MiniGPT4 (ref. 24), and result in an adapter with over 8 million parameters.
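The parameter count quoted above follows directly from the two embedding dimensions. The sketch below reproduces that arithmetic and shows the per-embedding linear map on a toy example; the function name is hypothetical, and whether the adapter has a bias term is not stated in the text, so the sketch assumes none.

```python
H_IMG = 2048      # vision embedding dimension stated in the text
H_LANG = 4096     # Llama3 embedding dimension stated in the text
N_TOKENS = 6 * 6  # spatial grid of visual embeddings per image

# A bias-free linear layer has H_IMG * H_LANG weights (no bias is an assumption).
n_weights = H_IMG * H_LANG
print(n_weights)  # 8388608, i.e. "over 8 million"


def apply_adapter(visual_embeddings, weight):
    """Map each visual embedding independently.

    visual_embeddings: list of n vectors of length h_img
    weight:            h_img rows, each of length h_lang
    Returns n vectors of length h_lang.
    """
    h_lang = len(weight[0])
    return [
        [sum(v[i] * weight[i][j] for i in range(len(v))) for j in range(h_lang)]
        for v in visual_embeddings
    ]
```

With the real dimensions, 36 visual embeddings of length 2048 would become 36 language-space embeddings of length 4096.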

Foundation vision-language models

We used the two most widely adopted foundation vision-language models for medical applications at the time of this study9,10. They were both trained on large biomedical datasets sourced from the Internet, and have been applied to chest X-rays47. The first, Med-Flamingo10, was built on Flamingo48 and finetuned on image and text data from medical textbooks and the PubMed Central Open Access (PMC-OA) dataset49. The second, LLaVA-Med9, developed by Microsoft, is a VLM built on LLaVA18 and finetuned to follow textual instructions regarding a broad range of biomedical images contained in PubMed Central 15M (PMC-15M)50. The training sets of both models contain retinal OCT images and associated text. As they were trained as generalist models on various imaging modalities, they were both purportedly capable of interpreting retinal OCT images. When provided with a retinal OCT image, we found that both could correctly identify its modality and begin generating diagnoses without being provided any prior information. More recently, other generative vision-language models for ophthalmology have been introduced, but these were either not designed for OCT imaging or not finetuned with instruction-tuning51,52. Our third baseline is OpenAI’s GPT-4o model (endpoint ‘gpt-4o-2024-05-13’). Unlike the two aforementioned medical VLMs, GPT-4o is a generalist model.

For Med-Flamingo, we then provide instructions using the following template provided in their code, replacing {question} with the instruction text:

You are a helpful medical assistant. You are being provided with images, a question about the image and an answer. Follow the examples and answer the last question.

Similarly, for LLaVA-Med we use the following conversation template provided by the developers:

A chat between a curious human and an artificial intelligence assistant. The assistant gives helpful, detailed, and polite answers to the human’s questions.###Human: {question}###Assistant:

Similarly, for the ChatGPT-4o API we provide the following system prompt:

You are an intelligent and helpful assistant. You follow all the instructions in the user prompt, and answer all the questions they ask for.

Report curation and question-answer pair generation (curriculum part 1)

To create the tabular reports for the first part of the curriculum we used a cluster-based approach to efficiently label the 41,926 training images with biomarker annotations53. This training cohort includes 25,825 female and 16,101 male patients with an average age of 81.8 years, with lower (Q1) and upper (Q3) quartiles of 77.0 and 88.0 years.

Contrastive learning is used to extract self-supervised features from the dataset. The dataset is then partitioned into 40 clusters of images that share common features. Labels are then assigned to these clusters by senior ophthalmologists. To this end, 20 images from each cluster were reviewed by senior ophthalmologists. If the majority of the images exhibited common features, such as ’large drusen’ or ’subretinal fluid’, these labels were assigned to the entire cluster. A review quantifying the accuracy of each cluster description is performed in Supplementary Section A.4.
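The cluster labeling rule can be illustrated with a small sketch. It assumes that each reviewed image is summarized as a set of feature labels and that "majority" means strictly more than half of the reviewed images; both the data layout and the exact threshold are assumptions, not details given in the text.

```python
from collections import Counter


def cluster_labels(reviewed, min_fraction=0.5):
    """Return the labels seen in more than `min_fraction` of reviewed images.

    `reviewed` is a list of label sets, one per reviewed image (20 per
    cluster in the text). The strict-majority threshold is an assumption.
    """
    counts = Counter(label for labels in reviewed for label in set(labels))
    threshold = min_fraction * len(reviewed)
    return sorted(label for label, n in counts.items() if n > threshold)
```

For example, if 12 of the 20 reviewed images show large drusen and 8 show subretinal fluid, only the large drusen label is assigned to the whole cluster.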

These labels were used in combination with the patient’s age, sex and their functional visual acuity score (measured on a LogMAR chart and converted to Letter score) to create the tabular reports. We also included the quality index of the image using an intensity-histogram-based approach54. We found that labeling the dataset’s quality index quartiles as ‘very poor’, ‘ok’, ‘good’ and ‘excellent’ effectively captured the characteristics of each quartile. Additionally, the reports list three biomarkers that are stated as not being present. These are drawn from a distribution of all biomarkers, weighted by their prevalence in the dataset, that were not among the cluster labels for that image. Counts of the prevalence of each tabular variable among the training images are shown in Supplementary Fig. 1a, b, and a sample of four of the resulting tabular reports is shown in Supplementary Fig. 1c.
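Two of the steps above lend themselves to a short illustration: mapping a quality index to its quartile label, and drawing three absent biomarkers weighted by prevalence. The sketch below is one possible reading; the function names, the handling of values that fall exactly on a quartile boundary, and sampling without replacement are all assumptions.

```python
import random

QUALITY_LABELS = ["very poor", "ok", "good", "excellent"]


def quality_label(index, cutpoints):
    """Label a quality index by quartile; `cutpoints` is (Q1, Q2, Q3)."""
    quartile = sum(index > c for c in cutpoints)  # 0..3
    return QUALITY_LABELS[quartile]


def sample_absent(prevalence, present, k=3, rng=None):
    """Draw up to k distinct biomarkers not in `present`, weighted by prevalence.

    `prevalence` maps biomarker name -> positive count in the dataset.
    """
    rng = rng or random.Random()
    pool = {b: w for b, w in prevalence.items() if b not in present}
    chosen = []
    for _ in range(min(k, len(pool))):
        names = list(pool)
        pick = rng.choices(names, weights=[pool[n] for n in names], k=1)[0]
        chosen.append(pick)
        del pool[pick]  # sample without replacement
    return chosen
```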

To generate question-answer pairs from the large volume of tabular reports we used an LLM available for download and local use. We chose WizardLLM-70B, though any freely-available LLM with instruction-following capabilities may be used for this purpose. This model was chosen as an open-weights model with strong performance in instruction following and general knowledge at the time of dataset creation. We then used the instruction template detailed in the “Question-answer generation prompt (curriculum part 1)” section in the Supplementary material. This resulted in a total of 408,505 question-answer pairs, or an average of 9.75 question-answer pairs per report depending on its complexity. Examples of the question-answer pairs generated by this approach are shown in the ‘curriculum part 1’ section of Supplementary Fig. 4a, b.

Report curation and question-answer pair generation (curriculum part 2)

The second part of the curriculum involves further tuning on a subset of 330 images from the training curriculum in part 1. This training cohort includes 205 female and 125 male patients with an average age of 80.8 years, with lower (Q1) and upper (Q3) quartiles of 75.0 and 87.0 years.

Unlike curriculum part 1, images used in curriculum part 2 were annotated with high-quality reports manually curated by retinal specialists. Specifically, two junior ophthalmologists were tasked with describing the main pathological biomarkers and diagnoses related to AMD, while also noting any other observation regarding the retinal anatomy. This yielded high-quality textual reports that go beyond the short notes that ophthalmologists typically write in clinical routine. The first junior ophthalmologist, with three years of experience specializing in ophthalmology, wrote the majority of the reports (244; see Supplementary Fig. 2a). While these were highly accurate, they were less comprehensive in their analysis than the remaining 86 reports written by the junior ophthalmologist with 10 years of experience (see Supplementary Fig. 2b).

Simultaneously, the same two junior ophthalmologists used the same methodology to produce 28 reports on images from the test set. In our analysis, these were found to be of high quality by the two senior ophthalmologists (see Fig. 4). As they were representative of the 330 reports collected on the training set, the senior ophthalmologists concluded that these results provide sufficient quality assurance for the reports used to generate curriculum part 2.

After the imaging reports were curated, we used an external LLM with 10 different instructions to generate up to 230 questions per image. The exact instructions used are documented in the “Question-answer generation prompts (curriculum part 2)” section in the Supplementary material. We take two preliminary steps before providing each instruction to the LLM. First, we replace the report placeholder in the instruction with the raw text of the image report. Second, because many of the QA generation instructions refer to guidelines that describe the mandatory capabilities of image-based clinical decision makers with regard to disease staging and patient referral for patients with AMD, we replace each guideline placeholder with the text of the corresponding guidelines (documented in Supplementary Fig. 10). These guidelines were verified by senior ophthalmologists, and were instrumental for the generation of questions with improved diversity and coverage, and also for creating questions about biomarkers that were absent in the image (and typically not mentioned in the report).

The smaller number of reports in the advanced curriculum permitted the use of more performant proprietary models for generating question-answer pairs. We used the ‘gpt-4o’ API endpoint from OpenAI. An example interaction with ‘gpt-4o’ for generating these question-answer pairs is shown in Supplementary Fig. 3. A sample of the question-answer pairs yielded by this approach is shown in the ‘part 2’ section of Supplementary Fig. 4a, b.

RetinaVLM model inference

RetinaVLM processes retinal OCT images and textual instructions. To begin building the input provided to the model, we first set the system prompt of the constituent LLM to:

You are a helpful ophthalmological specialist chatbot capable of interpreting retinal OCT images.

We then begin the instruction that will be provided to the LLM with the following line:

Here is an encoding of a retinal OCT image \n

For each image in the batch, we next add the text of the specific question to the instruction. For example:

Describe the OCT image in detail. Does it show evidence of retinal fluid?

To complete the textual input to the model, we populate the LLM’s conversation template with this instruction and include the question’s answer exclusively during training. This forms the full textual input, which we project to the embedding space of the LLM using its pre-existing tokenizer and pretrained embedding layer.

After embedding the input text, we process the retinal OCT image in question. To this end we downsample the image by a factor of 2 from 416 × 512 to 208 × 256 pixels. We then crop the image centrally in testing, and augment each image using the finetuning protocol outlined in ref. 21 in training. This results in a processed OCT image of size 192 × 192.
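The test-time preprocessing can be sketched as follows. The text does not state how the downsampling is performed, so keeping every second row and column is an assumption, as are the function names; a nested list stands in for the image array.

```python
def downsample_by_two(image):
    """Keep every second row and column (striding is an assumption;
    the text does not state the interpolation method)."""
    return [row[::2] for row in image[::2]]


def center_crop(image, size):
    """Return the central size x size region of a 2D nested-list image."""
    height, width = len(image), len(image[0])
    top = (height - size) // 2
    left = (width - size) // 2
    return [row[left:left + size] for row in image[top:top + size]]


scan = [[0] * 512 for _ in range(416)]  # placeholder 416 x 512 image
small = downsample_by_two(scan)          # 208 x 256
patch = center_crop(small, 192)          # 192 x 192
```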

Next, we use the ophthalmic vision encoder E to extract the 6 × 6 vision feature embeddings from the image. After flattening these, we apply the adapter to each embedding separately. This projects the visual embeddings to the input embedding space of the LLM. To create the final set of embeddings that are provided to the LLM, we replace the textual embeddings corresponding to the image placeholder phrase in the instruction with the 36 adapted vision embeddings from the actual retinal OCT image.

Finally, the resulting sequence of language embeddings and adapted visual embeddings are passed together through the frozen LLM. This yields a list of predicted token logits with the same length as the input sequence. We then compute the causal language modeling loss between the predicted answer logits and the ground truth answer tokens. We then optimize the adapter to minimize this loss.
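The loss described above is a cross-entropy restricted to the answer tokens. The sketch below illustrates that restriction in plain Python; it assumes the logits have already been aligned so that `logits[t]` scores the token `targets[t]`, and the function and argument names are hypothetical.

```python
import math


def answer_loss(logits, targets, answer_mask):
    """Mean cross-entropy over positions where `answer_mask` is True.

    logits:      list of per-position score lists over the vocabulary
    targets:     list of ground-truth token ids, aligned with `logits`
    answer_mask: list of booleans marking answer positions
    """
    total, count = 0.0, 0
    for scores, target, is_answer in zip(logits, targets, answer_mask):
        if not is_answer:
            continue  # prompt and image positions do not contribute
        peak = max(scores)  # subtract the max for numerical stability
        log_norm = peak + math.log(sum(math.exp(s - peak) for s in scores))
        total += log_norm - scores[target]
        count += 1
    return total / count if count else 0.0
```

In training, only the adapter parameters would receive gradient updates from this loss, since the vision encoder and LLM are frozen.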

Crucially, when training RetinaVLM we keep both the vision encoder and LLM frozen. That is, they are not updated during the entire training process. This is key to preserving RetinaVLM’s inheritance of the pretrained LLM’s language and reasoning capabilities. These enable RetinaVLM to handle versatile questions and instructions beyond those encountered in the curriculum during training. Thus, during training, we only update the adapter that feeds visual information regarding the OCT image to the LLM.

Training RetinaVLM on curriculum parts 1 and 2

RetinaVLM is trained sequentially on curriculum part 1 and part 2. After randomly initializing the adapter we first train the model on the 408,545 question-answer pairs regarding the 41,926 images in curriculum part 1 (introduction to retina) to obtain RetinaVLM-Base. We then continue to finetune the model on the 71,165 questions and answers regarding the 330 images in curriculum part 2 (advanced retinal specialism), resulting in the final RetinaVLM-Specialist model. For each of the curriculum parts we train RetinaVLM for 100,000 steps using a batch size of 12. In each step we randomly select one question-answer pair per image from the current curriculum. To update the network we use the AdamW optimizer with a learning rate of 10⁻⁴, β1 = 0.9 and β2 = 0.999.

Training on the two curriculum parts separately is important for assessing the accumulative benefits of progressively specialist training in two ways. Firstly, this enables us to measure the benefits of training on free-text medical reports over tabular data. Secondly, during development we found that reserving the highest quality data for the final training stage, curriculum part 2, improved the performance of the resulting model. This strategy is standard practice in the development of LLM-based models17.

Experimental setup

After VLMs have been trained on image and text datasets, they can be used to generate responses to new questions and images. To use the baseline foundation VLMs for testing we take the central crop in each 416 × 512 OCT image, resulting in an image of size 384 × 384. After repeating this image along the color dimension, ChatGPT-4o accepts the resulting 3 × 384 × 384 RGB image, while the Med-Flamingo and LLaVA-Med baselines require we downsample this to 3 × 224 × 224. To provide the image to the RetinaVLM variants, we first downsample the 416 × 512 image by a factor of two to 208 × 256, before taking a central crop resulting in an image of size 192 × 192.

We then employ the same method with all VLMs for generating responses to instructions. Provided the image and textual instruction, we build the output sequence of tokens by repeatedly appending the token to the output assigned the highest probability by the VLM. This is equivalent to using a temperature parameter set to 0, and is the standard approach for generating the most accurate and least creative output from LLM-based models. All other generation parameters, such as repetition penalty, were not used. This process is repeated until a stop token is generated, signaling the end of the VLM’s response. The model’s tokenizer is then used to convert the numeric output tokens to the final free text output.
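The decoding procedure above is plain greedy decoding. A minimal sketch is given below, assuming a hypothetical callable `next_token_scores(tokens)` that returns one score per vocabulary entry for the next token; real VLM interfaces differ, and the token limit is a parameter rather than a value from this paragraph.

```python
def greedy_decode(next_token_scores, prompt, stop_token, max_new_tokens):
    """Repeatedly append the highest-scoring token until the stop token.

    `next_token_scores` is a hypothetical stand-in for the VLM forward pass.
    Returns the generated token ids, excluding the stop token.
    """
    output = []
    for _ in range(max_new_tokens):
        scores = next_token_scores(prompt + output)
        token = max(range(len(scores)), key=scores.__getitem__)  # argmax
        if token == stop_token:
            break
        output.append(token)
    return output
```

The tokenizer would then convert the returned ids into the final free-text output.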

Our entire evaluation was conducted in a zero-shot setting; that is, after training on curriculum part 1 and part 2, RetinaVLM requires no further finetuning in order to perform tasks related to disease staging, patient referral and biomarker analysis. Instead, for each test we designed a specific instruction that was provided to all VLMs to generate the application-specific reports that were used in our analyses. These instructions were derived through experimentation with all VLMs on the validation set, and are documented in the following four subsections. To inform their design, we assessed the effectiveness of each prompt by reviewing the quality of the outputs it produced in all VLMs on a subset of 30 images from the validation set. This led to two observations. Firstly, all VLMs produced improved responses when prompted to first detail their ‘chain-of-thought’55. This led us to request that the model first describe the OCT image in detail before making any recommendations. Secondly, simpler, more direct prompts resulted in all VLMs making fewer errors in following our subsequent, test-specific instructions.

Report generation for disease staging

The following instruction was given to all VLMs to obtain the reports of the 276 test images that were analyzed in Fig. 3. The instruction requests VLMs to begin by describing the image, and then deduce the most advanced disease stage:

Describe the OCT image in detail and list any biomarkers or abnormalities, including the most likely AMD stage of the patient.

Then, based on those observations, state if the patient’s most advanced AMD stage is “healthy”, “early”, “intermediate”, “late dry”, “late wet (inactive)” or “late wet (active)”?

After the VLM generated its report (using a maximum of 500 tokens), we appended the following incomplete sentence to its output:

Based off the image and those findings, the patient’s most advanced AMD stage is

which the VLM then completes using up to 300 tokens. From these tokens, we extracted the final disease staging prediction by searching for the first instance of any of the listed disease stages. This post-processing step is only necessary for quantitative tests of accuracy, as it enables the reliable extraction of the disease stage from the free text report. In cases where the VLM discusses multiple disease stages, such as in ‘more advanced than early AMD, and is intermediate AMD as there is no evidence of late wet AMD’, the disease stage was manually extracted. In cases where no disease stage was provided or could be extracted manually, this counted as an ‘Invalid response’.
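The first-instance search can be sketched as a simple substring match. The function below is an illustration only: the name, the case-insensitive matching and the use of plain substrings are assumptions. It also shows why completions that discuss several stages needed manual review, since the earliest mention is not always the intended answer.

```python
STAGES = ["healthy", "early", "intermediate", "late dry",
          "late wet (inactive)", "late wet (active)"]


def extract_first(completion, options):
    """Return the option that occurs earliest in `completion`, else None."""
    text = completion.lower()
    hits = [(text.find(option), option) for option in options if option in text]
    return min(hits)[1] if hits else None


# An unambiguous completion is handled automatically.
print(extract_first(" intermediate, with no fluid.", STAGES))  # intermediate

# A completion discussing several stages returns the first mention ("early"),
# which is not the intended answer; such cases were extracted manually.
print(extract_first("more advanced than early AMD, and is intermediate AMD", STAGES))
```

The same helper applies to any fixed list of answer options; a `None` result corresponds to a candidate ‘Invalid response’.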

Report generation for qualitative evaluation

For the qualitative evaluation by the senior ophthalmologists, we randomly selected 28 of the test images from the retrospective dataset, and tasked the two junior ophthalmologists with annotating the images. We provided the following instruction to RetinaVLM-Specialist and LLaVA-Med to generate their image reports:

Write an extensive report describing the OCT image, noting any biomarkers or abnormalities related to AMD, and their qualities. Also comment on which biomarkers are absent.

Finally, based on the image and these findings, your report should estimate the AMD disease stage of the patient.

You should not include any patient referral recommendations in your report, but you can comment if they need treatment with anti-vegf.

We found that ChatGPT-4o tended to write excessively long reports. To improve the performance of ChatGPT-4o in direct evaluations by senior ophthalmologists, we requested a ‘brief’ rather than an ‘extensive’ report, and appended the following text to the original instruction: ‘Your report should be no longer than 120 words long’. This helped make reports more concise with no observable loss in accuracy, though they still exceeded the length of reports written by junior ophthalmologists and RetinaVLM-Specialist.

We then assigned 14 of the images and the corresponding 42 reports by ChatGPT-4o, RetinaVLM-Specialist and the junior ophthalmologists, to each of the two senior ophthalmologists, who evaluated them independently according to the correctness, completeness and conciseness. These criteria, also documented in Fig. 4, were:

- Correctness: The report is accurate in its main observations and conclusions regarding the image
- Completeness: The report contains all relevant observations and conclusions that can be inferred from the image
- Conciseness: The report does not make observations and conclusions that are not supported by, or not seen in, the image

Report generation for patient referral

The following instruction was given to all VLMs to generate reports that focus on patient referral recommendations, which are analyzed in Fig. 5. This instruction was run for the 95 referral images, introduced in the “External testing dataset of referred patients” section. In order to accurately convey the specific requirements of the wet AMD treatment clinic, we provided the comprehensive referral protocol used by the senior ophthalmologists in the instruction:

Do not provide a disease stage, or referral recommendation yet.

Being seen by a specialist at the Southampton clinic:

A. The Southampton clinic requires that patients with any sign of intraretinal fluid, any sign of subretinal fluid, or any sign of cyst(s), MUST be seen by a specialist at the Southampton clinic within the next two weeks.

B. The Southampton clinic requires that patients who do not have any sign of intraretinal fluid, any sign of subretinal fluid, or any sign of cyst(s), but do have some biomarkers of early or intermediate AMD, should be seen by a specialist at the Southampton clinic for routine referral.

C. The Southampton clinic requires that patients who do not have any sign of intraretinal fluid, any sign of subretinal fluid, or any sign of cyst(s), but do have medium to large drusen, drusenoid PED, hypertransmission or atrophy, should be seen by a specialist at the Southampton clinic for routine referral.

D. The Southampton clinic does not need to see patients who have no biomarkers and healthy retinas at all.

Southampton specialist visit: Next, tell me if your initial report of the OCT image indicates that the patient should be seen by a specialist at the Southampton clinic “within the next two weeks”, to be seen “within 18 weeks (routine referral)”, or “not be seen” at all?

As before, after the VLM generated its report (using a maximum of 500 tokens), we added the following incomplete sentence to its output:

My report indicates that the patient

which is then completed by the VLM for up to a maximum of 300 tokens. We then searched these tokens for the first instance of one of the three levels of referral urgency, ‘within the next two weeks’, ‘within 18 weeks (routine referral)’ or ‘not be seen’, in the VLM’s output report. In cases where different wording is used, but with identical meaning, the VLM’s prediction is extracted manually. In the remainder of cases, it is listed as an ‘Invalid response’.

Report generation for biomarker analysis

The following instruction was given to all VLMs to generate reports that conclude the presence or absence of the 10 different biomarkers, evaluated on the 396 test images in Fig. 6:

Describe the OCT image in detail and list all biomarkers or abnormalities. Detail if there are any signs indicating that {biomarker} might be present, even if there is only a small amount.

Finally, conclude your findings by telling me if {biomarker} {article} “not present”, or if potentially any amount of {biomarker} {article} “present” in the OCT image.

For each of the 10 biomarkers, the phrase {biomarker} was replaced by the actual biomarker name (such as ‘subretinal fluid’), and the {article} replaced by ‘is’ for singular biomarkers or ‘are’ for plural biomarkers (such as drusen). After the VLM generated its report (using a maximum of 500 tokens), we appended the following incomplete sentence to its output:

To conclude these findings, in the OCT image {biomarker} {article}

which the VLM then completes using up to 300 tokens. We then searched for the first instance of ‘not present’ or ‘present’ to extract the model’s prediction of the absence or presence of the biomarker, respectively. In cases where different wording is used, but with identical meaning, such as stating the biomarker was ‘detected’ rather than ‘present’, the VLM’s prediction is extracted manually. In the remainder of cases, it is listed as an ‘Invalid response’.
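The template filling and answer extraction for the biomarker task can be sketched as below. One subtlety is that the string ‘not present’ itself contains ‘present’, so the two cases must be distinguished by position. The function names and the case-insensitive matching are assumptions made for illustration.

```python
PROMPT_TAIL = "To conclude these findings, in the OCT image {biomarker} {article}"


def fill(template, biomarker, plural=False):
    """Substitute the biomarker name and the matching verb form."""
    return template.format(biomarker=biomarker, article="are" if plural else "is")


def extract_presence(completion):
    """Return True (present), False (not present) or None (no match)."""
    text = completion.lower()
    present_at = text.find("present")
    if present_at == -1:
        return None  # candidate for manual review or 'Invalid response'
    absent_at = text.find("not present")
    # "not present" contains "present" starting four characters later.
    if absent_at != -1 and absent_at + 4 == present_at:
        return False
    return True
```

For example, `fill(PROMPT_TAIL, "drusen", plural=True)` ends in "drusen are", and a completion such as " detected in small amounts" returns `None`, matching the cases that were resolved manually.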

Computing language-based image saliency maps

We provide methodological details for the computation of the language-based saliency maps shown in Fig. 6d and Supplementary Fig. 8. With saliency maps we aim to identify which regions of the image were most relevant to certain passages of RetinaVLM-Specialist’s responses, such as ‘large subretinal fluid’. The most direct way to generate these visualizations is to use attention maps, but we found that Llama3’s pretrained attention maps did not result in any meaningful saliency maps. To address this, we used Grad-CAM31, a technique for highlighting the image regions most relevant to the prediction of an image classifier. Specifically, we apply Grad-CAM to the sum of the tokens in the output passage, effectively reframing the LLM as an image classifier where the output corresponds to a specific passage of the report. This allows us to generate saliency maps relative to that passage. A code implementation can be found at the repository referenced in the “Code availability” section.
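The core Grad-CAM computation can be sketched independently of any deep learning framework. The sketch assumes the feature-map activations and the gradients of the summed passage-token score with respect to those activations have already been obtained; which layer is used, and how the gradients are computed, are not specified here and would follow the referenced repository.

```python
def grad_cam(activations, gradients):
    """Grad-CAM map from [channel][row][col] nested lists of equal shape.

    Each channel is weighted by the spatial mean of its gradient, the
    weighted channels are summed, and negative values are clipped (ReLU).
    """
    rows, cols = len(activations[0]), len(activations[0][0])
    weights = [sum(map(sum, g)) / (rows * cols) for g in gradients]
    return [
        [max(0.0, sum(w * a[r][c] for w, a in zip(weights, activations)))
         for c in range(cols)]
        for r in range(rows)
    ]
```

The resulting low-resolution map (6 × 6 for the encoder described earlier, if its final feature map is used) would typically be upsampled to the image size for display.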

Comparison to an image-only classification-based deep learning baseline

VLMs are an emerging technology that hold great potential to automate language-based reporting and decisions regarding medical images. To provide a comparison between VLMs and more established approaches to classification we use RETFound, which was pretrained on over 700,000 2D OCT images and exhibits strong performance when finetuned on as few as 100 images21,22. RETFound makes predictions from the image alone and cannot interpret nor respond to written language. As an image encoder, RETFound instead outputs a single classification per image.

We compare the VLMs against RETFound in both the disease staging and patient referral tasks. To this end, we formulated disease staging as a six-way classification task using the same stages as the experiment shown in Fig. 3. Similarly, we formulated the patient referral task as a three-way classification task using the same referral levels as the experiment shown in Fig. 4. In both cases, we manually extract training labels from the imaging reports of the same 330 training images used in curriculum part 2 to train RetinaVLM-Specialist (see the “Retrospective patient dataset” section).

After formulating these tasks, we used the standard linear evaluation approach to evaluate the performance of the RETFound image encoder. This involves applying global average pooling to RETFound’s output tokens to yield a feature vector of size 1024. We then trained a linear layer that maps this feature vector to the output classes of the given classification problem. This was done using an AdamW optimizer with a learning rate of 3 × 10−4 for 5000 training steps. In both cases, performance converged and little to no overfitting was observed on the validation set. We then selected the step with the best performance on the validation set for evaluation. Finally, this classifier was tested on the same arbitrated test labels as all VLMs in Figs. 3 and 4.

The results of both experiments are shown in Supplementary Fig. 6a. For the disease staging task we find that RETFound (0.63 F1) significantly outperforms the best performing foundation medical VLMs (0.11 F1) and RetinaVLM-Base (0.30 F1). Notably, it performs as well as RetinaVLM-Specialist (0.63 F1). However, on the patient referral task RETFound (0.37 F1) performs on par with the best foundation VLM (0.39 F1) and significantly worse than RetinaVLM-Specialist (0.67 F1).

The equal performance of RetinaVLM-Specialist and the image-only RETFound baseline in disease staging implies that both have reached an upper bound on performance for this task on the retrospective dataset. However, we observed relatively poor performance from the image-only baseline on the patient referral task, which we attribute to domain shift. Specifically, the retrospective dataset lacks images with intact retinal pigment epithelium layers that feature small fluid pockets, which represent many of the urgent referral cases in the referral dataset. This limits the image-only model’s ability to generalize well to these cases. While accurate patient referral is achievable using an image-only encoder like RETFound, addressing this domain shift would necessitate the collection of a new training dataset that is more representative of the referral cohort. In contrast, RetinaVLM-Specialist is better able to mitigate this domain shift by utilizing task-specific textual instructions that provide details about the target domain, namely the patient referral protocol outlined in the “Report generation for patient referral” section.

Measurements of performance and statistical analysis

To calculate the performance of each VLM and retinal specialist in all multiple-choice question answering, we used the micro F1 score. This aggregates the total number of false positives (FP), false negatives (FN), true negatives (TN) and true positives (TP) over all classes before computing the F1 score using Eq. (1)

$${F}_{1}=\frac{2\cdot TP}{2\cdot TP+FP+FN} \tag{1}$$

In cases where the VLM returned an ‘Invalid response’ by failing to pick one of the listed options, this was counted as a false negative for the ground truth class. After calculating the F1 score, we determined the 95% confidence interval through bootstrapping N = 1000 times with replacement.
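A minimal sketch of this scoring procedure follows. It is an illustration rather than the authors' code: the function names, the fixed random seed, and the use of the percentile method to read off the interval from the bootstrap distribution are assumptions not stated in the text.

```python
import random

INVALID = "Invalid response"


def micro_f1(truths, preds):
    """Micro F1 over single-label multiple-choice answers.

    A wrong answer is a false negative for the true class and a false
    positive for the predicted class. An invalid response only adds a
    false negative for the true class.
    """
    tp = fp = fn = 0
    for truth, pred in zip(truths, preds):
        if pred == truth:
            tp += 1
        else:
            fn += 1
            if pred != INVALID:
                fp += 1
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0


def bootstrap_ci(truths, preds, n_resamples=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval (assumed method) for the micro F1."""
    rng = random.Random(seed)
    n = len(truths)
    scores = []
    for _ in range(n_resamples):
        # Resample question indices with replacement.
        idx = [rng.randrange(n) for _ in range(n)]
        scores.append(micro_f1([truths[i] for i in idx], [preds[i] for i in idx]))
    scores.sort()
    lower = scores[int((alpha / 2) * n_resamples)]
    upper = scores[min(n_resamples - 1, int((1 - alpha / 2) * n_resamples))]
    return lower, upper
```

For example, with two correct answers, one wrong answer and one invalid response, TP = 2, FP = 1 and FN = 2, giving F1 = 4/7.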

Tests of significance (aggregated in Supplementary Fig. 6) were calculated using a two-sided McNemar’s test56. This test compares two models on the same samples and focuses on the discordant cases, in which exactly one of the two models predicts the label correctly. A significant p-value from McNemar’s test allows us to reject the null hypothesis that both models have identical classification performance. We then used the following notation to indicate levels of statistical significance: *** for p ≤ 0.001, ** for p ≤ 0.01, * for p ≤ 0.05, and ‘ns’ (not significant) for p > 0.05.
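As a worked illustration, the test and the star notation can be sketched with the standard library alone. The exact binomial form of McNemar’s test is used here as an assumption; the text does not state whether the exact or the chi-square variant was applied, and the function names are hypothetical.

```python
from math import comb


def mcnemar_exact(correct_a, correct_b):
    """Two-sided exact McNemar's test from per-sample correctness flags.

    Only discordant pairs (one model right, the other wrong) enter the
    statistic. Under the null hypothesis they split 50/50.
    """
    only_a = sum(1 for a, b in zip(correct_a, correct_b) if a and not b)
    only_b = sum(1 for a, b in zip(correct_a, correct_b) if b and not a)
    n = only_a + only_b
    if n == 0:
        return 1.0  # no discordant pairs, no evidence of a difference
    tail = sum(comb(n, i) for i in range(min(only_a, only_b) + 1)) / 2 ** n
    return min(1.0, 2 * tail)


def significance_label(p):
    """Map a p-value to the notation used in the figures."""
    if p <= 0.001:
        return "***"
    if p <= 0.01:
        return "**"
    if p <= 0.05:
        return "*"
    return "ns"
```

For instance, if one model is correct on ten samples that the other gets wrong, and the reverse never happens, the two-sided p-value is 2/1024 ≈ 0.002, which is labeled ‘**’.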

Computing hardware and software

We used Python 3.12.2 for all question-answer generation, VLM training, and VLM evaluation. To generate the question-answer pairs for curriculum part 1 we used three 40GB NVIDIA A40 GPUs. For both training RetinaVLM and evaluating all VLMs we used a single 80GB NVIDIA A100 GPU and PyTorch57 version 2.1.2. Training RetinaVLM takes 1 day on curriculum part 1, and another day on curriculum part 2. Llama3 was downloaded via Huggingface with model ID ‘meta-llama/Meta-Llama-3-8B-Instruct’. The code and model weights of the baseline VLMs Med-Flamingo and LLaVA-Med were installed following their respective installation instructions. Confusion matrices and results calculations were computed with scikit-learn version 1.4.1 and numpy version 1.26.4. Figures and tables were created in draw.io v24.4.0 using plots generated by matplotlib version 3.8.4 and seaborn version 0.13.1. Grad-CAM was computed using grad-cam version 1.5.0. McNemar’s tests of significance were calculated using statsmodels version 0.14.1.
